This article was first published in 2015.

Smart Home

With the proliferation of IoT devices embedded in households under the banner of “smart home” initiatives, there is good cause for concern regarding the privacy afforded to consumers, especially when such devices share data, intentionally or not, with other devices or services. Unfortunately, a backlash against this data sharing might well hamper the development of intelligent systems. But there might be hope in an alternative approach that goes beyond big fat data feeds.

Smart versus Intelligent

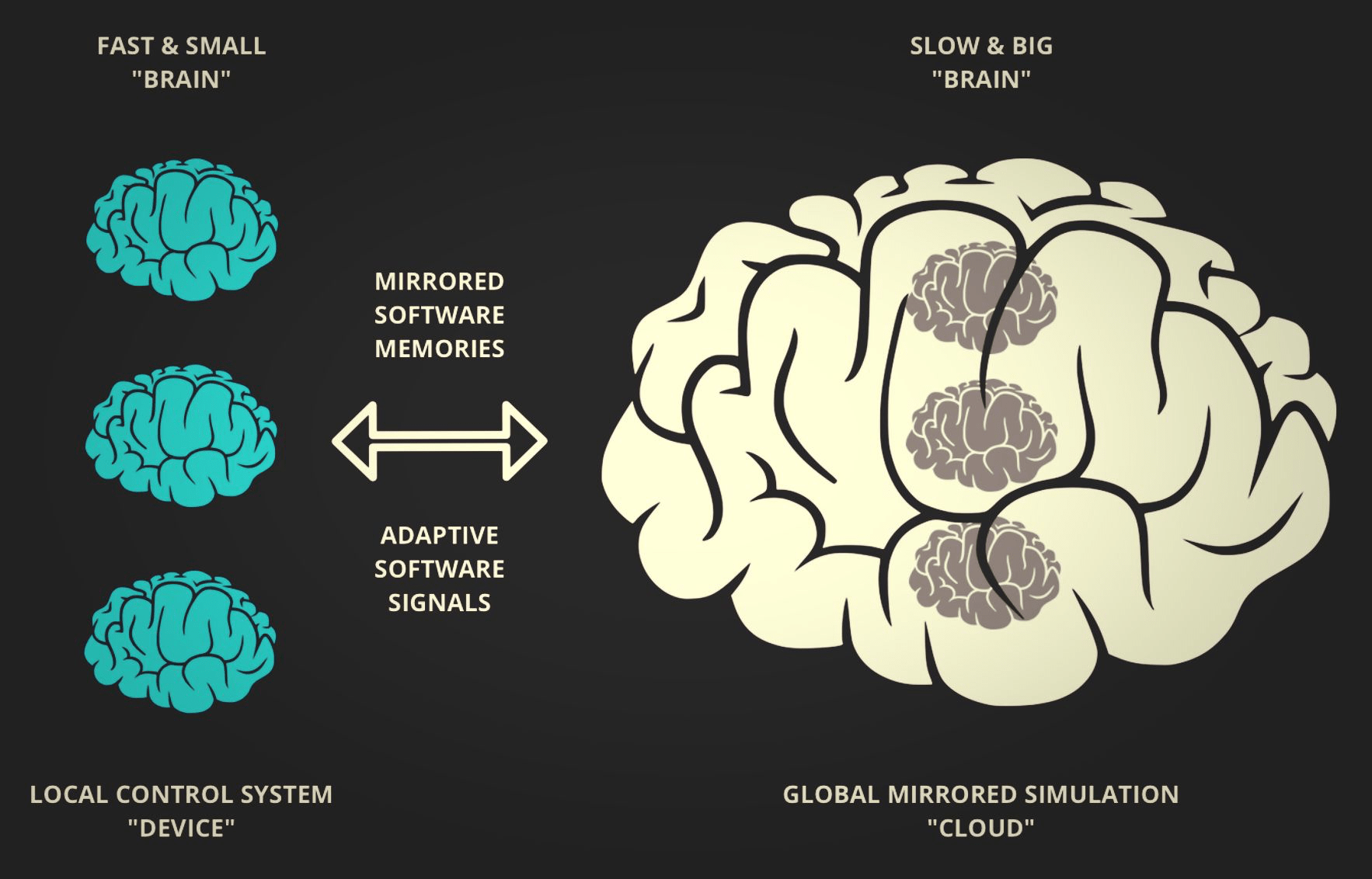

A way to distinguish between smart and intelligent is through an analogy with the brain. A smart device is much like a control system: it reacts relatively fast to local changes it observes and reasons about them over a small time frame and within a particular context or coordinate. An intelligent system, on the other hand, evolves relatively slowly, refining the adaptations it has deployed remotely in response to changes in behavior and, to some extent, state or goals, over a longer time frame and a broader context.

Slow versus Fast

For the slow and big brain to develop intelligence, it needs to receive stimulus from the remote, fast, and small brains and then, over time, turn this into signals that better adapt the reactive mechanisms within the fast and small brains. The result is a collective intelligence.

Sensory versus Episodic

Today, the stimulus used to develop machine intelligence is sensory data transferred between devices and the cloud, the same data that concerns many consumers. But what if, instead of sending data such as a thermostat’s temperature set point, a device transmitted mostly the actions taken by its embedded software: an episodic memory of the algorithm itself?

Context versus Activity

Instead of sending contextual and measurement states, possibly compromising privacy, the smart device would stream its actions and function calls in real time to a backend simulation running in a “cloud” that mirrors many devices simultaneously.

This mirrored machine world would then allow the providers of IoT devices to observe the “smartness” of their algorithms in action and to record, recall, and learn from such simulations without intruding on privacy, at least to the extent feared today.
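To make the distinction concrete, here is a minimal sketch of a device that emits only its actions. Everything in it is an assumption made for illustration: the `ActionEvent` fields, the `Thermostat` class, the alias `twin-0001`, the half-degree band, and the in-memory list standing in for the link to the mirrored world. It is not a description of any real product or protocol.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class ActionEvent:
    """One entry in the device's episodic memory: what the algorithm did."""
    device_alias: str   # hypothetical opaque alias, not the real device identity
    sequence: int       # ordering of actions within this device
    action: str         # name of the control action that was taken


class Thermostat:
    """Toy controller that emits actions, never its readings or set point."""

    def __init__(self, alias: str, set_point: float,
                 emit: Callable[[ActionEvent], None]) -> None:
        self._alias = alias
        self._set_point = set_point   # stays on the device
        self._emit = emit
        self._sequence = 0
        self._heating = False

    def _act(self, action: str) -> None:
        self._sequence += 1
        self._emit(ActionEvent(self._alias, self._sequence, action))

    def step(self, temperature: float) -> None:
        """React to a local reading; only the resulting action leaves the device."""
        if temperature < self._set_point - 0.5 and not self._heating:
            self._heating = True
            self._act("heat_on")
        elif temperature > self._set_point + 0.5 and self._heating:
            self._heating = False
            self._act("heat_off")


stream: List[ActionEvent] = []   # stand-in for the link to the mirrored world
device = Thermostat("twin-0001", set_point=21.0, emit=stream.append)
for reading in [19.0, 20.0, 21.8, 21.0, 20.2]:
    device.step(reading)

actions = [event.action for event in stream]
```

The stream carries the order and kind of actions but neither the readings nor the set point. As the closing section concedes, a real system would probably still need some parameterized data alongside the actions.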

Reactive versus Adaptive

There would still be data sharing but at a higher order – not state, but the action of control activities. The feed between the real world and the mirrored world need not be uni-directional; with bi-directional streaming between devices and the cloud software, developers could move from mostly static and reactive control algorithms to far more dynamic and adaptive ones driven by signals sent back from the mirrored world.

Local versus Global

Many control algorithms have static factors, such as hardwired parameters, that could be adjusted and adapted online by the mirrored world via signals, themselves mirrors of factors, sent back to the device. An intelligent algorithm would move from local to distributed as well as global.
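A rough sketch of that feedback loop follows. The `Signal` type, the `hysteresis` factor, its bounds, and the switching threshold are all hypothetical names and values chosen for illustration. The mirror looks only at action names, and the device clamps every adjustment to limits it enforces locally.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, Iterable, Tuple


@dataclass(frozen=True)
class Signal:
    """Message sent from the mirrored world back to a device."""
    factor: str    # name of the tunable factor on the device
    delta: float   # requested adjustment


class Controller:
    """Device side: a formerly hardwired factor now accepts bounded adjustments."""

    LIMITS = {"hysteresis": (0.2, 2.0)}   # assumed safety bounds, enforced locally

    def __init__(self) -> None:
        self.factors: Dict[str, float] = {"hysteresis": 0.5}

    def apply(self, signal: Signal) -> None:
        low, high = self.LIMITS[signal.factor]
        adjusted = self.factors[signal.factor] + signal.delta
        self.factors[signal.factor] = min(high, max(low, adjusted))


def mirror_signals(actions: Iterable[Tuple[str, str]],
                   max_switches: int) -> Dict[str, Signal]:
    """Mirror side: flag devices whose (alias, action) stream switches too often."""
    switches = Counter(
        alias for alias, action in actions if action in ("heat_on", "heat_off")
    )
    return {
        alias: Signal("hysteresis", 0.25)
        for alias, count in switches.items()
        if count > max_switches
    }


observed = [("twin-0001", "heat_on"), ("twin-0001", "heat_off")] * 3
observed += [("twin-0002", "heat_on")]
signals = mirror_signals(observed, max_switches=4)

controller = Controller()   # the device behind alias "twin-0001"
controller.apply(signals["twin-0001"])
```

Keeping the bounds on the device means the mirrored world can tune behavior without being able to push a factor outside a range the device considers safe.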

Device versus Twin

A benefit of doing so would be that the device software could remain unchanged. The smartness would be achieved remotely from data shared across many mirrored devices and enhancements to the signal actuators or generators within the mirrored simulated device world.

Another benefit of this approach is that any additional sharing of observations and extension of capabilities would be performed from within the mirrored world without giving up the identity and access to the actual device itself.

In doing so, smart devices would remain lean and focused on the control aspects, with expensive computation and extended capabilities offloaded to the cloud, a mirror world of machine behavior, affording other means of communication with the consumer.

Online versus Offline

Moreover, offline device memories and simulated recollection fit more naturally with the immutability and isolation that computation needs. They also address one of the most significant issues in engineering data systems: the disconnect between data collected as telemetry, as promoted by MQTT, and reality as humans perceive it, which is grounded in motion and action.
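As a small illustration of simulated recollection, the sketch below replays a recorded action log into a mirrored device. The `Twin` class, its fields, and the action names are assumptions made for the example, and a tuple stands in for an append-only episodic memory.

```python
from dataclasses import dataclass
from typing import Iterable, Tuple


@dataclass
class Twin:
    """Mirrored device whose state is rebuilt purely from recorded actions."""
    heating: bool = False
    cycles: int = 0

    def on_action(self, action: str) -> None:
        if action == "heat_on":
            self.heating = True
        elif action == "heat_off":
            self.heating = False
            self.cycles += 1   # one completed heating cycle


def replay(memory: Iterable[str]) -> Twin:
    """Recreate the twin's behavior offline from a recorded action log."""
    twin = Twin()
    for action in memory:
        twin.on_action(action)
    return twin


# A tuple stands in for an append-only, immutable episodic memory.
memory: Tuple[str, ...] = ("heat_on", "heat_off", "heat_on", "heat_off", "heat_on")

first = replay(memory)
second = replay(memory)   # an independent replay of the same memory
```

Because the memory is never modified and each replay starts from a fresh twin, both replays end in the same state, which is what makes recorded behavior convenient to recall and study in isolation.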

Waste versus Lean

We need to rethink the primacy we give to data and see it merely as a means to recreate reality in the form of machine action. Once we do, the design of data systems, their flows, and their storage will change radically. Being realistic, it will not be possible to train the big brain solely on event data that describes the sequencing of software execution devoid of context. There will still be a need to transmit parameterized data associated with action and flow, but it won’t be “let’s just keep sending everything we can collect until we figure out how to monetize it”!