The current focus on DORA metrics (deployment frequency, lead time for changes, change failure rate, and time to restore service) appears to repeat the simplistic approach that emerged from the DevOps movement, particularly its emphasis on golden signals and service level agreements in the context of site reliability engineering.

This tendency to reduce engineering management challenges to a few metrics seems to persist. It could be argued that we have replaced the KISS principle with KISSES – keep it stupidly simplistic engineering systems.

As engineering systems grow increasingly complex, the engineering community’s focus on simplistic measurement and reporting gets in the way of operational scalability. That scalability depends on sensemaking and adaptive steering of such systems. A thorough grasp of how the parts of a situation interconnect is crucial, because it lays the foundation for adaptive governance: policies that adjust and autonomous processes that self-manage.

Intelligence isn’t to be found in metrics. It can only be obtained when action is appropriate to the overarching context. We confuse abstraction with simplistic thinking, in which complex phenomena and processes are reduced to rudimentary binary answers: true or false, up or down, above or below, before or after.

The allure of simplistic solutions is understandable, especially when dealing with complex sociotechnical systems, but prioritizing them often comes at the expense of long-term effectiveness. The nuance in these systems calls for a thoughtful approach that balances ease of implementation with robust and sustainable outcomes. Such an approach avoids the pitfalls of short-termism and keeps the focus on long-term gains, particularly organizational learning. Having reviewed software development over the past three decades, I see a bigger issue: we keep trying to climb higher and higher, but the ground keeps shifting, and we have to go back down to the basics.

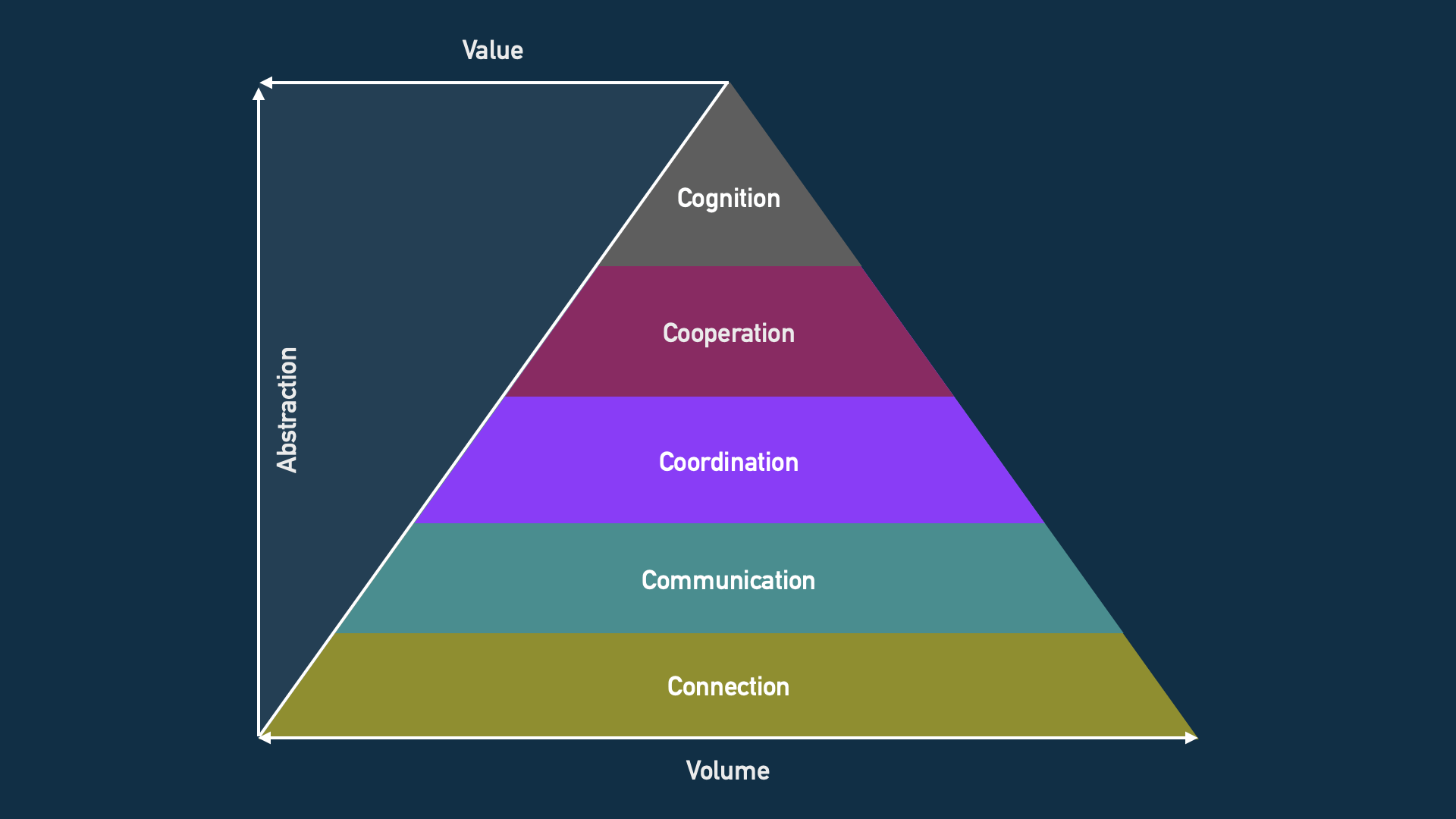

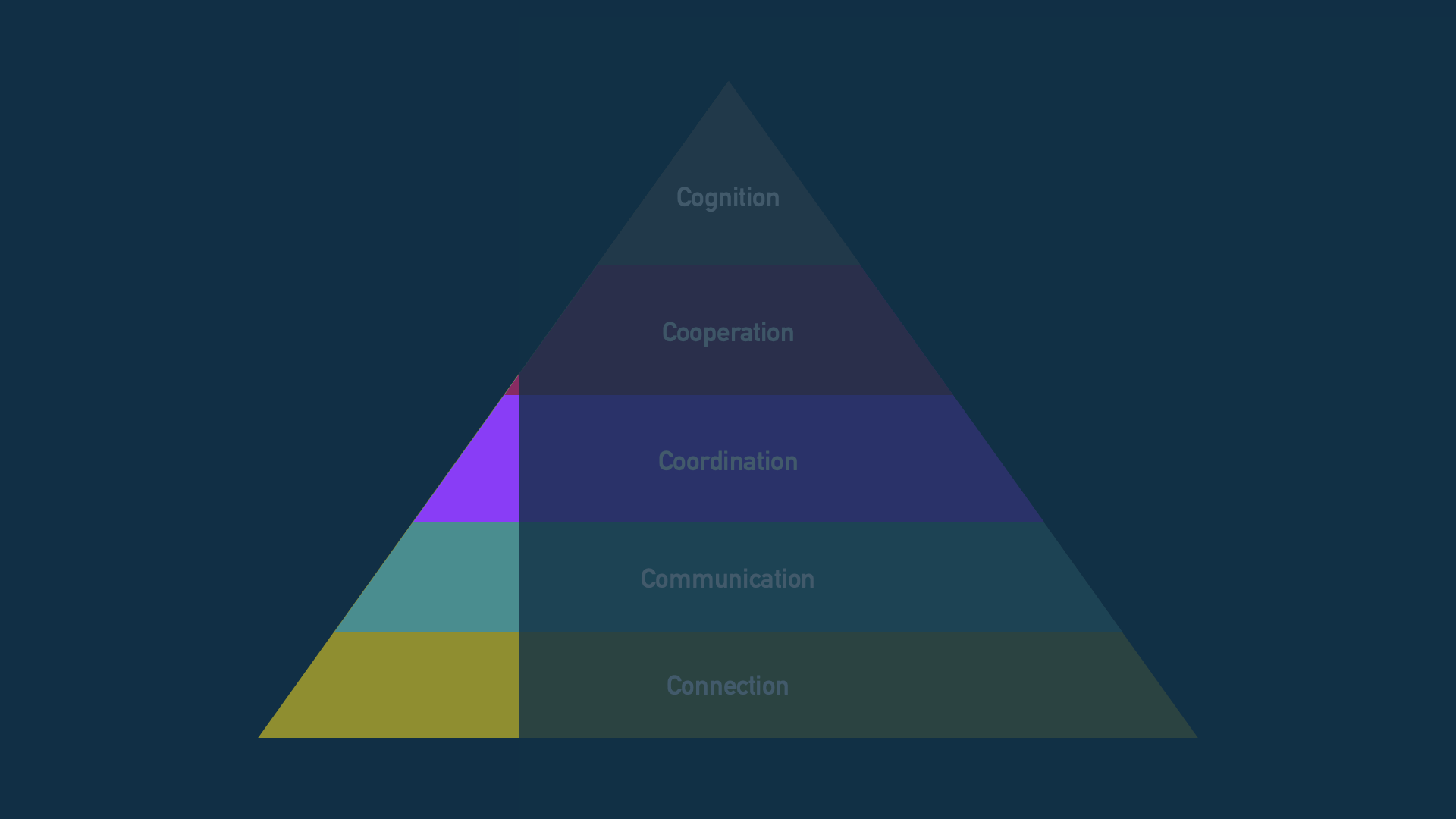

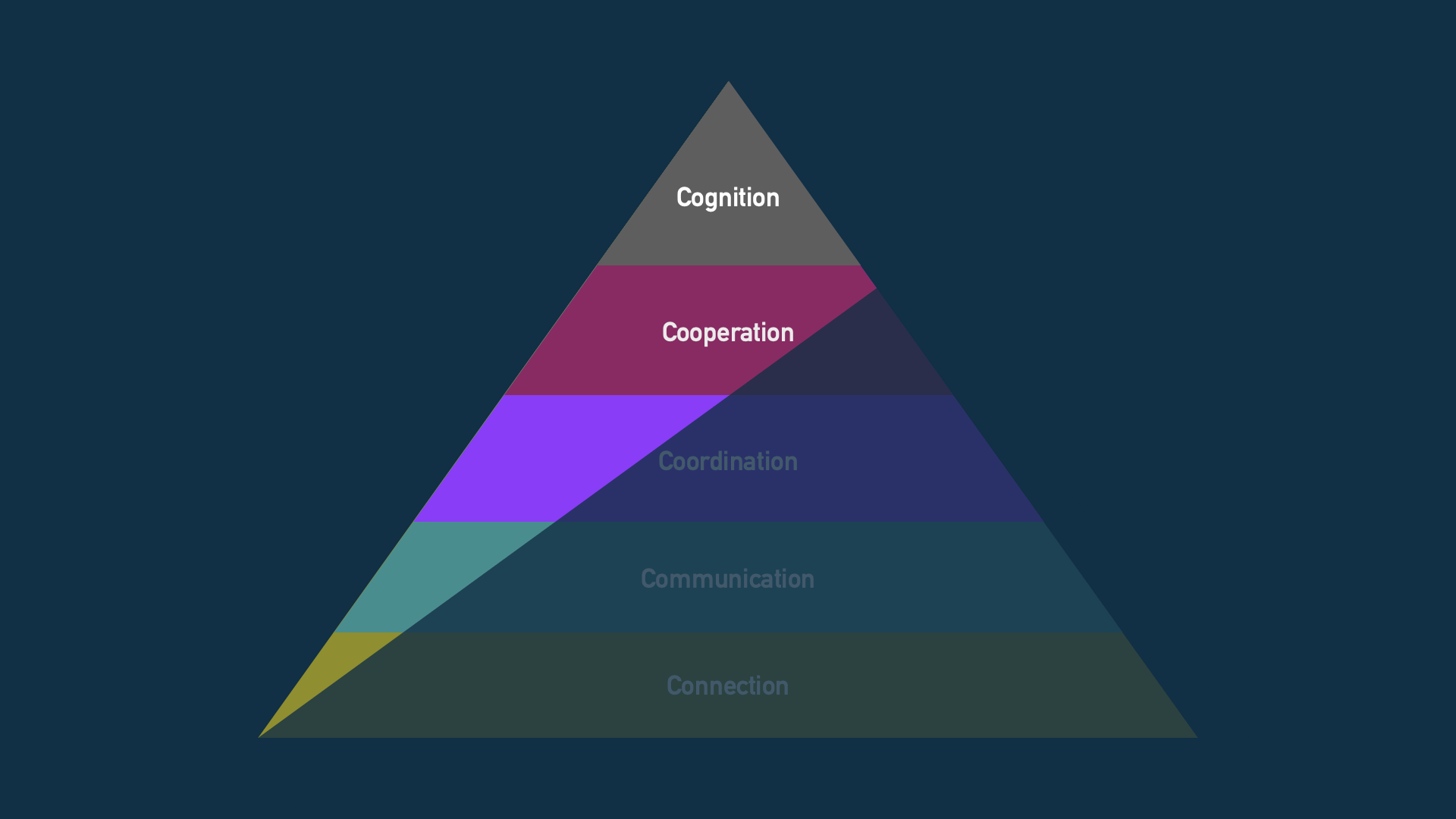

Constant maintenance within the communication and connection layers devours a significant amount of engineering effort. Whenever new conceptualizations are offered, there is often either a reluctance to embrace them or an inability to integrate them because of existing communication structures. The breakthroughs that do manage to rise to the coordination and cooperation layers are usually extremely narrow in scope and highly specialized.

Intelligent networking is one example. From the application’s perspective it belongs to the communication layer, yet its conceptual capabilities within its own domain place it above the coordination and cooperation layers.

For many software and systems engineering teams, design and development effort breaks down as shown in the figures above. Most of the work done by humans and machines goes into maintaining connections between systems and services and managing communication over the various channels those connections afford.

The state of play in the observability space is a case in point. Most customers and vendors still spend an enormous amount of effort moving data between a growing number of sources and sinks over various pipelines and protocols.

Little has been abstracted in the decades since distributed tracing was introduced. Instead of imagining new conceptualizations of what systems and services are and how they should be sensed and steered, the focus remains on the best means of connecting and communicating measurement data. Whenever there has been an attempt to recover the signal lost to noise, such as the introduction of service cognition, the community has steadfastly stayed at the lowest layer by relabeling collected data as signals. AIOps is a pipe(line) dream. Can it be fixed?

Maybe, but if it were, the distribution of work would need to look more like the following. Perhaps this is where faster adoption of automation, self-adaptive systems, and AI will lead us. Design and development would become a dialog with the machine, conducted at the level of ordinary human social discourse: past and present situations, possible scripts, and probable scenarios that bring us to a projected situation.

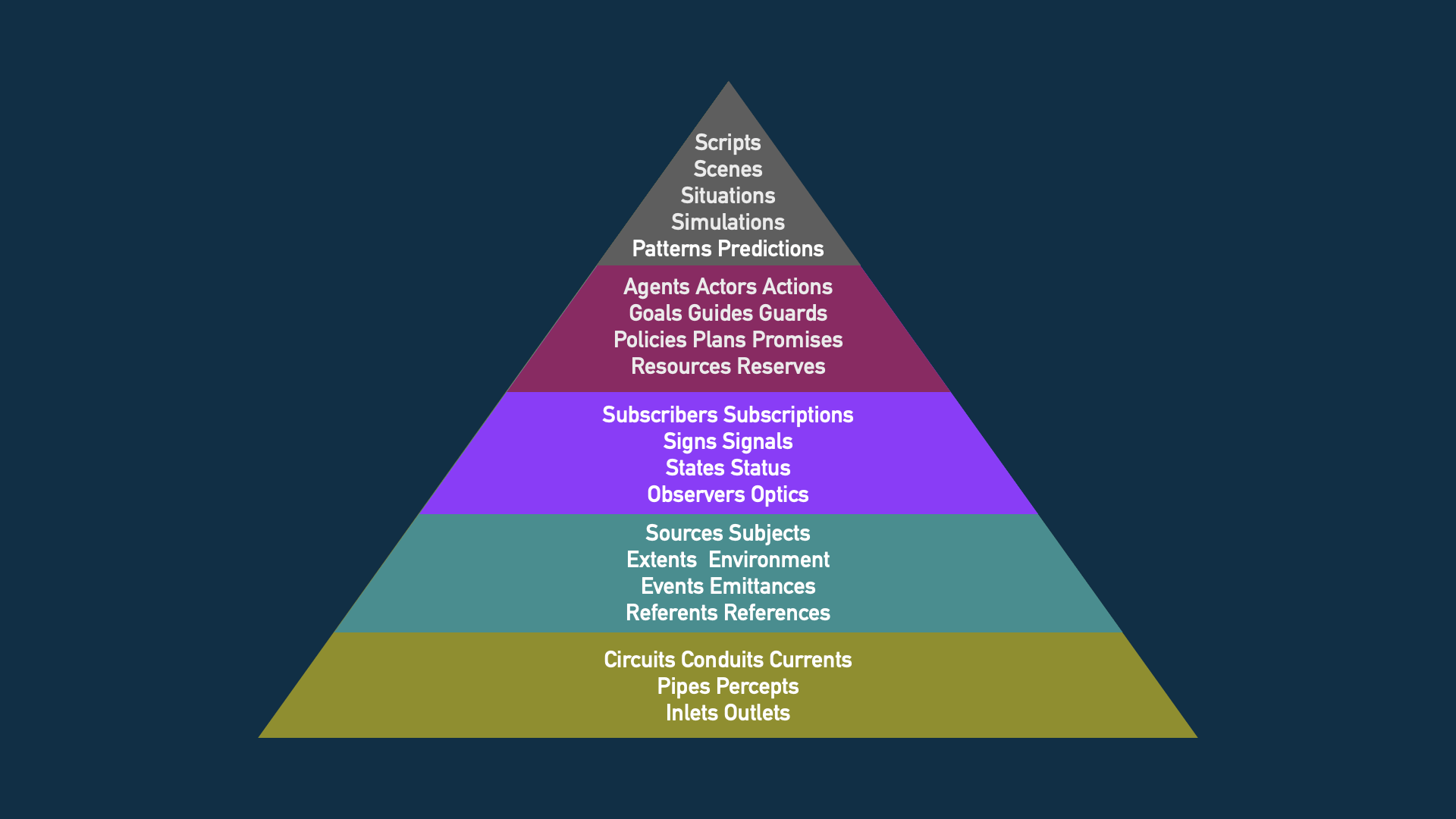

While DORA metrics offer a starting point for measuring engineering effectiveness, they lack context and ultimately prove insufficient. A more critical question emerges: how do we determine the level of abstraction (layer) at which a design operates? The answer lies in understanding the domain’s specific context, which is where intelligent analysis comes in. Still, some universal concepts can serve as signposts for the different layers. For instance, plans, policies, and promises often indicate a design that facilitates cooperation. Situations, simulations, patterns, and predictions, meanwhile, typically point toward a design operating at the cognitive layer. A practical approach is to list all the key concepts a design introduces and then map each one to the appropriate layer. The layer mapping of interfaces within the Humainary toolkit demonstrates this approach.
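The listing-and-mapping approach can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the Humainary toolkit’s method. The layer ordering (connection, communication, coordination, cooperation, cognition) is inferred from the layers named in this article, and the figures may include layers it omits. Only the cooperation signposts (plan, policy, promise) and the cognition signposts (situation, simulation, pattern, prediction) come from the text. The connection, communication, and coordination entries, along with the names `SIGNPOSTS`, `map_concepts`, and `design_layer`, are hypothetical. Concepts are assumed to be given in singular form.

```python
from enum import IntEnum


class Layer(IntEnum):
    # Assumed ordering: a higher value means a higher level of abstraction.
    CONNECTION = 1
    COMMUNICATION = 2
    COORDINATION = 3
    COOPERATION = 4
    COGNITION = 5


# Signpost concepts mapped to layers. The cooperation and cognition entries
# follow the article; the lower-layer entries are hypothetical placeholders.
SIGNPOSTS = {
    "connection": Layer.CONNECTION,   # hypothetical
    "endpoint": Layer.CONNECTION,     # hypothetical
    "message": Layer.COMMUNICATION,   # hypothetical
    "channel": Layer.COMMUNICATION,   # hypothetical
    "protocol": Layer.COMMUNICATION,  # hypothetical
    "schedule": Layer.COORDINATION,   # hypothetical
    "lock": Layer.COORDINATION,       # hypothetical
    "plan": Layer.COOPERATION,
    "policy": Layer.COOPERATION,
    "promise": Layer.COOPERATION,
    "situation": Layer.COGNITION,
    "simulation": Layer.COGNITION,
    "pattern": Layer.COGNITION,
    "prediction": Layer.COGNITION,
}


def map_concepts(concepts):
    """Split a design's key concepts into (mapped-to-layer, unmapped) groups."""
    mapped = {}
    unmapped = []
    for concept in concepts:
        # Normalize case and surrounding whitespace before the lookup.
        layer = SIGNPOSTS.get(concept.strip().lower())
        if layer is None:
            unmapped.append(concept)  # needs domain context to place
        else:
            mapped[concept] = layer
    return mapped, unmapped


def design_layer(concepts):
    """Return the highest layer any mapped concept reaches, or None if none map."""
    mapped, _ = map_concepts(concepts)
    return max(mapped.values(), default=None)


if __name__ == "__main__":
    concepts = ["Message", "Channel", "Policy", "Widget"]
    mapped, unmapped = map_concepts(concepts)
    print({name: layer.name for name, layer in mapped.items()})
    print("unmapped:", unmapped)
    print("design layer:", design_layer(concepts).name)
```

Taking the maximum treats a design as operating at the highest layer any of its concepts reaches. That choice is only one possible reading. The unmapped list deliberately surfaces the concepts where domain-specific context, rather than a fixed lookup table, has to decide the layer.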