Collective Memories

In 2012 I visited the Atlassian Offices in Sydney to give a tech talk on the Simz product that I had recently released, which allowed distributed systems engineers and application monitoring staff to stream, in near-real-time, the software execution behavior of one or more JVM runtimes into a single Java runtime where it was replayed as a simulation that could be inspected in such a way that it would appear the code that was executing at that time in other Java runtimes was being executed in this mirror. Everything was recreated, including threads, stacks, and frames, except for the actual bytecode within the frames (invocations). It was a fantastic technology and still is, but far too ahead of its time; it was the end game before the game started. A game we now call observability.

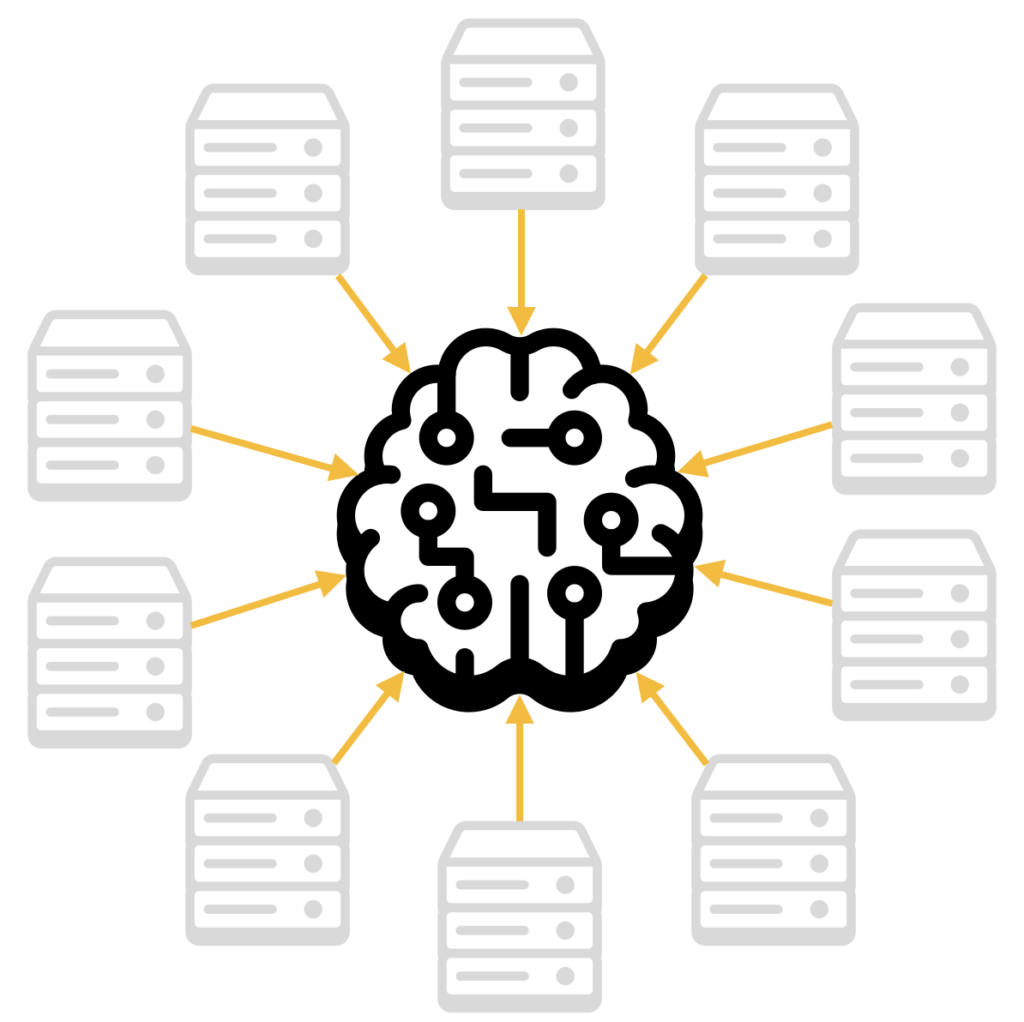

After the talk, one of the engineers at Atlassian approached me to ask about the scalability of projecting the episodic memories of many JVM runtimes into a single runtime. I detailed all the various cost optimizations built into the adaptive instrumentation, the network protocol, and the discrete event simulation engine that had allowed it to process more than half a billion events a second, fed from more than 25 JVM runtimes. He was impressed with the throughput but was more concerned about the number of JVM runtimes that could connect and communicate with the mirrored world, which I referred to as a Matrix for Machines. This was at the beginning of the microservices craze that is ongoing. I asked how many instrumented runtimes he was thinking of—his response of 75,000 initially threw me off. When I asked why, how, when, etc. I was told that every JIRA tenant had 3 JVM processes spun up when activated and that, at the time, they had 25,000 tenants. I remember feeling the buzz from the talk on machine memories leave me there and then.

I would have to revisit the implementation of the discrete event simulation engine to scale to the number of connections and mirrored threads and then try to imagine new visualizations that factored in the rendering of so many instances. I was not too concerned by the memory requirements of the namespaces (packages, class, methods) as I assumed it was a replicate of the same codebase across all 75,000 deployments. Not entirely true all the time, but close enough. I had calmed my mind when I returned to my hotel. I went to sleep feeling my dream of being able to simulate (playback) the entirety of a distributed system episodic memory within a single runtime was slipping away. I worried I would have to build another distributed system to manage a distributed system. Damn, complexity was pulling hard at simplicity. I had always expected to replicate the mirror but not slice it up with load balancers, proxies, queues, partitions, etc.

The following day I woke feeling tired, having mentally explored every conceivable distributed systems architecture in my wakeful sleep that would still allow near-real-time mirroring. This was important because distributed tracing only makes things visible after the fact. This is something that many who use OpenTelemetry find out far too late in the game. I abandoned distributed tracing in 2008 for this reason and the significant cost overhead that comes with it. I would not reopen that chapter; that would be a step back to cave painting days.

Two Paths

Then it occurred to me that I was overly preoccupied with the vast number itself and not the actual objective of monitoring. I created this machine memory mirroring technology because I wanted to enable with great ease the development of collective intelligence. But the collective was not all 75,000 processes. Each tenant was a mini-world in itself. There was no reason to install extensions within a single mirror that observed the entire population in high-definition, which comes with episodic memories. Every tenant had its usage patterns, configurations, customizes, etc., and they were not using any application-level shared resources for the most part.

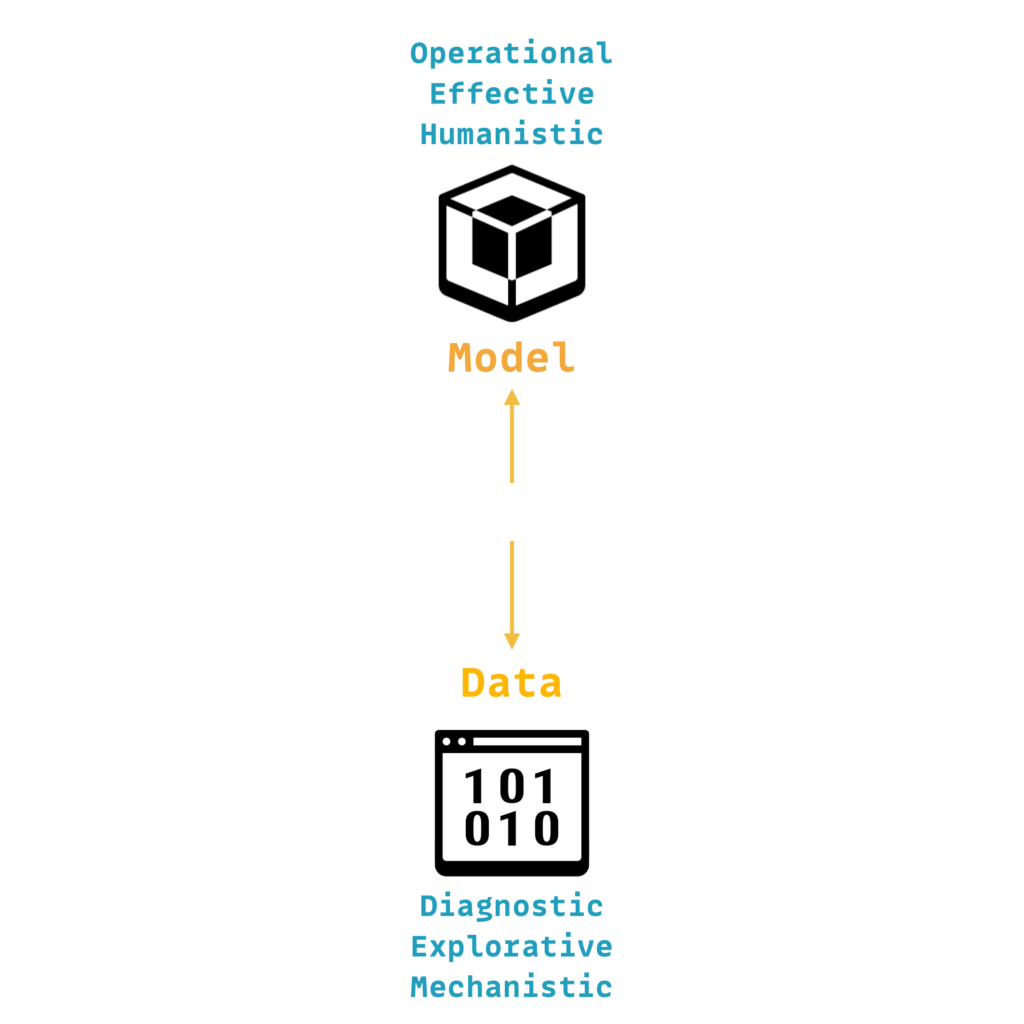

When I returned from my tour of Australia and Asia, I concluded that there are at least two distinct paths to the future of observability. One path that would continue increasing the volume of collected data in its attempt to reconstruct reality in high-definition on a single plane without much consideration for effectiveness or efficiencies. That is where we are today, with most products and solutions sold and promoted under the observability banner. Another path would focus on seeing the big picture in near-real-time, the situation, and the situations within a situation, from the perspective of human or artificial agents tasked with managing hugely distributed systems by identifying patterns and forming predictions at various aggregations and scopes. This led to Signals, then Signify, and finally, Humainary.