The abundance of metrics in modern observability ironically obscures systemic understanding. Current methods prioritize low-level measurement, neglecting the crucial synthesis of comprehensive insights from raw data.

A paradigm shift is needed, focusing on continuous, holistic assessments of system stability and confidence, visualized not as isolated numerical data points but as dynamic, intuitive representations of overall system health.

This approach leverages inherent human cognitive capabilities for understanding complex systems, directly addressing the challenges operators face in today’s digital environments. The field has long mistaken the precision of metrics for understanding, resulting in dashboards resembling chaotic control rooms rather than clear, actionable overviews. This isn’t true observability; it’s numerical theater, a performance of quantification that obscures a fundamental lack of insight. The flaw isn’t superficial; it’s architectural, deeply embedded in tools and practices.

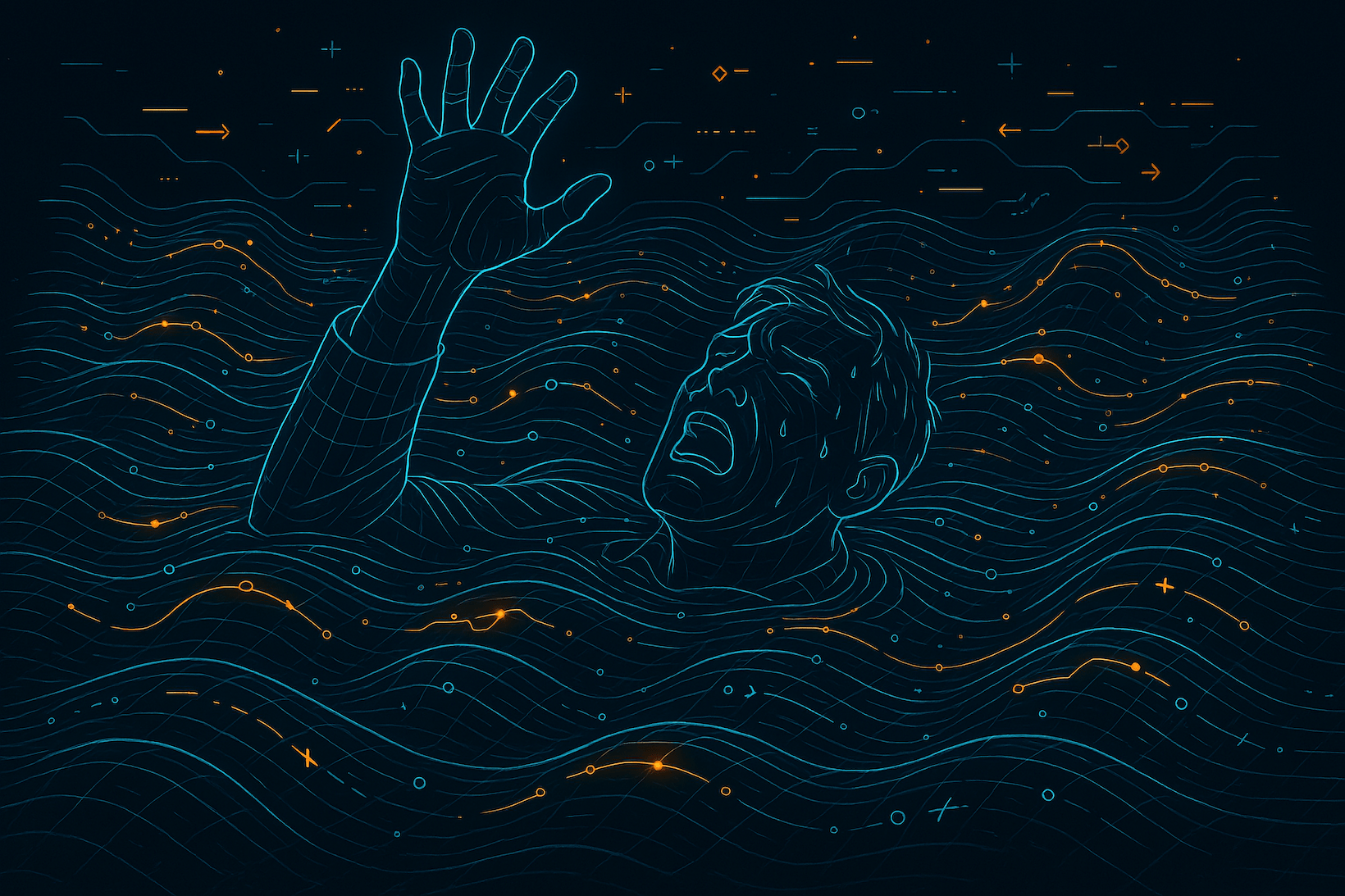

We’re drowning in our digital exhaust: petabytes of time-series data that, despite their volume, fail to answer the operator’s most pressing, almost existential, questions:

- Are the system and its subsystems stable?

- Are patterns emerging that signal instability?

- Can I trust the representation displayed?

- Does it reflect reality, or is it a flawed measurement or incomplete data?

- What’s the epistemic integrity of this view?

- What vital functions are at risk?

This analysis reveals how poorly the current metric-focused approach serves the cognitive ergonomics of human operators, particularly in stressful situations. We propose a shift toward a form of observability that prioritizes comprehension over quantification, emphasizing simplicity and clarity in complex systems.

The Downward Cascade of Quantification

Dominant observability frameworks, particularly OpenTelemetry, have inadvertently cemented a fundamental architectural inversion. The critical task of defining what’s meaningful has been delegated downwards, deep into the application code itself. Functions and services are now burdened with emitting predefined metrics, often because the layers above lacked the necessary interpretive capacity—the cybernetic “brain”—to distill meaning from the rich, raw signals of system operation. This approach has created several critical problems:

- Prematuration: Developers must decide what to measure before understanding what questions need answering

- Entanglement: Application code becomes cluttered with instrumentation and the implementation decisions behind it

- Rigidity: Once metrics are defined, they’re difficult to change or reinterpret

- Reductionism: Individual metrics fail to capture system-wide patterns and relationships

The focus often degrades into debates about metric types – a form of instrumentalist fixation. This preoccupation with measurement tools distracts from the ultimate purpose of observability: gaining meaningful understanding.

It’s a classic case of mistaking the means for the end, focusing on the hammer rather than on what it’s meant to build. The remedy is to reduce cognitive complexity by shifting focus from low-level mechanics to high-level system behavior.

The Foundational Inversion

First, we need to clear up some confusion in the industry. OpenTelemetry uses ‘signal’ to mean ‘stream of telemetry data’. But that’s like calling a weather report a ‘signal’. In semiotics, a signal isn’t the data—it’s the act of expressing something meaningful about a situation.

- A signal is a communicative act.

- It’s intentional, contextual, and often tied to state change or activity.

- It says: “Something happened” or “I want you to notice this.”

- It happens in time and has a narrative role.

- It carries meaning – a sign a subject emits to inform or affect an observer.

Signals are about causality and interpretation. A signal is a sign in motion. It points to something beyond itself (like a bark means danger, not just sound). Think of signals as semantic messages that encode intent, change, or judgment.

A metric is a question you ask. An instrument is a tool for gathering possible answers. But just because you have a tool doesn’t mean you know the right question. Metrics aren’t things you emit. Metrics are things you ask. Instruments aren’t part of business logic. Signals are. Your application should express what happened, not how to count it.
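The split between signals and metrics can be sketched in a few lines of Python. This is a minimal illustration, not an existing API: `Signal`, `Substrate`, `charge`, and `DeclineRatio` are hypothetical names, and the payment rule is a stand-in. The point it shows is that the business function only says what happened, while the counting lives in an observer that asks its own question.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class Signal:
    subject: str  # who is speaking, e.g. "checkout"
    sign: str     # what happened, e.g. "payment_declined"
    at: float     # when it happened, in seconds


class Substrate:
    """Hypothetical in-process channel that carries signals to observers."""

    def __init__(self) -> None:
        self._subscribers: List[Callable[[Signal], None]] = []

    def subscribe(self, observer: Callable[[Signal], None]) -> None:
        self._subscribers.append(observer)

    def emit(self, signal: Signal) -> None:
        for observer in self._subscribers:
            observer(signal)


def charge(substrate: Substrate, amount: float, now: float) -> bool:
    """Business logic: it reports what happened and counts nothing."""
    approved = amount <= 100.0  # stand-in for a real payment decision
    sign = "payment_approved" if approved else "payment_declined"
    substrate.emit(Signal("checkout", sign, now))
    return approved


class DeclineRatio:
    """An observer's question: what share of payments were declined?"""

    def __init__(self) -> None:
        self.counts: Counter = Counter()

    def __call__(self, signal: Signal) -> None:
        self.counts[signal.sign] += 1

    def value(self) -> float:
        total = sum(self.counts.values())
        return self.counts["payment_declined"] / total if total else 0.0


substrate = Substrate()
ratio = DeclineRatio()
substrate.subscribe(ratio)
for second, amount in enumerate([20.0, 250.0, 80.0, 40.0]):
    charge(substrate, amount, now=float(second))
print(ratio.value())  # 0.25: one of four payments was declined
```

Because the ratio is defined on the observer side, a different question (say, declines per minute) could be asked later without touching `charge`.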

Pushing metrics downwards forces premature interpretation at the level least equipped to understand the context.

The real tragedy of current observability isn’t just that we went overboard with metrics. We went overboard in the wrong direction—downward—because the middle layer, the one that thinks, interprets, and judges, didn’t exist.

The Missing Middle

A critical architectural flaw is the absence of a dedicated interpretive layer, a cognitive engine, within many systems.

A semantic layer, comprising agents, models, and heuristics, is necessary to synthesize meaning from diverse signals across time, space, and system structure. Here, we provide the system with reflection and judgment capabilities, converting raw data into a coherent understanding. This isn’t about measurement. It’s about meaning. It’s where signals and signs are observed, compared, remembered, and modeled. Not all in one place. Not by one agent. But by a network of observers, each with:

- Scope: What do I see?

- Sensitivity: How reactive am I?

- Context: What history do I hold?

- Competence: How well do I judge?

Together, they form situational intelligence—a kind of collective cognition for your system. This is where metrics should come from—not from emitters but from sense-makers.
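One way to picture such an observer is as a small record holding those four properties. The sketch below is an assumption-laden illustration: the `Observer` class, the relative-deviation rule, and the competence-weighted vote in `collective_alarm` are all hypothetical choices, picked only to show how several observers with different sensitivities can disagree about the same event and still yield a collective reading.

```python
from collections import deque
from dataclasses import dataclass, field
from statistics import mean
from typing import Dict, List, Optional, Set, Tuple


@dataclass
class Observer:
    scope: Set[str]      # what do I see: the subjects I watch
    sensitivity: float   # how reactive am I: relative deviation I call notable
    competence: float    # how well do I judge: weight of my vote, 0..1
    # what history do I hold: recent values per subject
    context: Dict[str, deque] = field(default_factory=dict)

    def observe(self, subject: str, value: float) -> Optional[bool]:
        """True or False for a notable deviation; None when I cannot judge."""
        if subject not in self.scope:
            return None
        history = self.context.setdefault(subject, deque(maxlen=50))
        baseline = mean(history) if history else None
        history.append(value)
        if not baseline:  # no history yet, or a zero baseline
            return None
        return abs(value - baseline) / abs(baseline) > self.sensitivity


def collective_alarm(votes: List[Tuple[float, Optional[bool]]]) -> float:
    """Competence-weighted share of judging observers that saw a deviation."""
    judged = [(weight, vote) for weight, vote in votes if vote is not None]
    total = sum(weight for weight, _ in judged)
    if total == 0:
        return 0.0
    return sum(weight for weight, vote in judged if vote) / total


jumpy = Observer(scope={"checkout"}, sensitivity=0.1, competence=0.25)
calm = Observer(scope={"checkout"}, sensitivity=0.5, competence=0.75)
for latency_ms in [100.0, 100.0, 100.0]:
    jumpy.observe("checkout", latency_ms)
    calm.observe("checkout", latency_ms)

votes = [(o.competence, o.observe("checkout", 130.0)) for o in (jumpy, calm)]
print(collective_alarm(votes))  # 0.25: only the low-weight observer reacted
```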

The True North: Condition and Confidence

A condition assessment evaluates the system’s historical, current, and anticipated situational state. It’s an underlying behavioral pattern relative to a desired state of stability or change:

- Converging: Moving towards reliable, predictable operation.

- Stable: Maintaining equilibrium within acceptable bounds.

- Diverging: Drifting away from desired states towards potential failure.

- Erratic: Exhibiting unpredictable, inconsistent behavior.

- Degraded: Functioning but impaired, fragile, or inefficient.

An assessment of a condition without confidence is a guess. Operators need epistemic strength behind their judgments. Was the assessment made from a robust model? Are the indicators aligned and reinforced? Or are they noisy, incomplete, or out-of-date? Confidence qualifies truth. It’s what distinguishes a hunch from actionable insight. It provides the grounding for trust in human-machine interaction.
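To make the pairing of condition and confidence concrete, here is a rough sketch. Everything in it is an assumption made for illustration: the `assess` function, its thresholds, the reading of "degraded" as "steady but off target", and a confidence model based only on how much evidence is available. A real observer would draw on richer models; the sketch only shows that an assessment is a pair, never a bare label.

```python
from dataclasses import dataclass
from enum import Enum
from statistics import mean
from typing import Sequence


class Condition(Enum):
    CONVERGING = "converging"
    STABLE = "stable"
    DIVERGING = "diverging"
    ERRATIC = "erratic"
    DEGRADED = "degraded"


@dataclass(frozen=True)
class Assessment:
    condition: Condition
    confidence: float  # 0..1, how much evidence backs the condition


def assess(errors: Sequence[float], tolerance: float = 0.05,
           expected_samples: int = 6) -> Assessment:
    """Judge recent distances from the desired state (0 = on target), oldest first."""
    if len(errors) < 2:
        raise ValueError("need at least two samples to judge a trajectory")
    half = len(errors) // 2
    trend = mean(errors[half:]) - mean(errors[:half])  # > 0 means drifting away
    jitter = mean(abs(b - a) for a, b in zip(errors, errors[1:]))
    if max(errors) <= tolerance:
        condition = Condition.STABLE
    elif jitter <= tolerance and abs(trend) <= tolerance:
        condition = Condition.DEGRADED   # steady, but steadily off target
    elif jitter > abs(trend):
        condition = Condition.ERRATIC    # swings dominate any net movement
    elif trend < 0:
        condition = Condition.CONVERGING
    else:
        condition = Condition.DIVERGING
    # Assumed confidence model: completeness of evidence only.
    confidence = min(1.0, len(errors) / expected_samples)
    return Assessment(condition, confidence)


full = assess([0.5, 0.4, 0.3, 0.2, 0.1, 0.05])  # converging, full evidence
thin = assess([0.2, 0.2, 0.21])                 # degraded, half the evidence
print(full.condition.value, full.confidence)
print(thin.condition.value, thin.confidence)
```

The second call is the interesting one: the label may well be right, but with half the expected samples an operator is told to treat it as a hunch rather than a finding.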

The Landscape: Observability as Ecology

Observability should evoke the feeling of watching weather patterns roll across a landscape rather than the static analysis of a spreadsheet. Instead of focusing on discrete, isolated metrics, we should aim to visualize a continuous topography depicting system stability, observe the ebb and flow of confidence and uncertainty like incoming waves, and recognize the natural, inherent patterns of oscillation and disturbance within the system’s behavior. This approach capitalizes on our innate ability to discern intricate patterns in natural settings. It has the following benefits:

- Intuitive Pattern Recognition: Humans excel at processing spatial information

- Reduced Cognitive Load: Unified visualization rather than dozens of separate metrics

- Natural Sense of Propagation: Showing how instability spreads through interconnected systems

- Temporal Awareness: Wave-like motion naturally communicates system trajectory

Accurate system representation necessitates thoughtful service placement, mirroring real-world relationships. This entails positioning services according to their interdependencies and interactions, leveraging elevation to depict hierarchical structures, utilizing natural features as component metaphors, and shaping the landscape to convey architectural information.
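As one possible reading of "elevation depicts hierarchy", the sketch below derives a height for each service from a dependency map, so that entry points sit on high ground and shared foundations sit in the valley. The `elevations` function, the example topology, and the choice that callers sit above their dependencies are all illustrative assumptions; the opposite orientation would be equally defensible.

```python
from typing import Dict, Set, Tuple


def elevations(depends_on: Dict[str, Set[str]]) -> Dict[str, int]:
    """Map each service to the length of its longest downstream dependency chain."""
    memo: Dict[str, int] = {}

    def height(service: str, path: Tuple[str, ...]) -> int:
        if service in path:
            raise ValueError(f"dependency cycle through {service!r}")
        if service not in memo:
            below = depends_on.get(service, set())
            memo[service] = 1 + max(
                (height(dep, path + (service,)) for dep in below), default=-1
            )
        return memo[service]

    for service in depends_on:
        height(service, ())
    return memo


# Hypothetical topology: each service maps to the services it calls.
topology = {
    "web": {"checkout", "search"},
    "checkout": {"payments", "db"},
    "search": {"db"},
    "payments": {"db"},
    "db": set(),
}
levels = elevations(topology)
for service, level in sorted(levels.items(), key=lambda kv: -kv[1]):
    print(f"{'#' * (level + 1):<5}{service}")  # crude text profile, highest first
```

A real layout would also need horizontal placement driven by interaction strength; this sketch covers only the vertical axis.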

The observer serves as the missing functional component. It not only collects data but also actively observes, compares (to historical data and models), retains memories, models future scenarios, identifies subtle deviations, and makes judgments. It encapsulates the system’s ability for self-assessment. From a network of observers, we receive assessments that go beyond mere data points, such as “System converging,” “Service degrading,” and “Confidence declining.” This aligns with constructing more resilient systems. To implement this approach, we need:

- A means (substrates) for components (subjects) to emit signals (signs) about their behavior

- An observer collective (subscribers) that interprets signals across time and components (subjects)

- Models (past, present, and future) that define what “stable” means in different contexts (hierarchies)

- Visualizations representing system state (conditions) as a meaningful changing landscape

Conclusion: From Measurement to Meaning

The next evolution in observability is not a further expansion of metrics. It lies in elevating meaning: constructing systems that not only present data but actively derive interpretations from it. In this approach, services should emit signals, expressions of what happened, rather than predetermined answers.

The role of observers, whether human or automated, lies in interpreting these signals and forming assessments.

Dashboards, consequently, should reflect this derived understanding, presenting insights rather than solely displaying raw figures. This shift acknowledges that the most crucial metric is the system’s stability and the level of confidence we have in that assessment—all other aspects serve as supporting details. It’s imperative to restore meaning to the core of our monitoring efforts and construct systems designed for self-understanding.

The fundamental insight is that we’ve been working backward. Instead of starting with the questions and working toward the signals that answer them, we started with metrics, hoping they would help answer questions we hadn’t fully articulated. Our tooling has completely inverted the natural order.